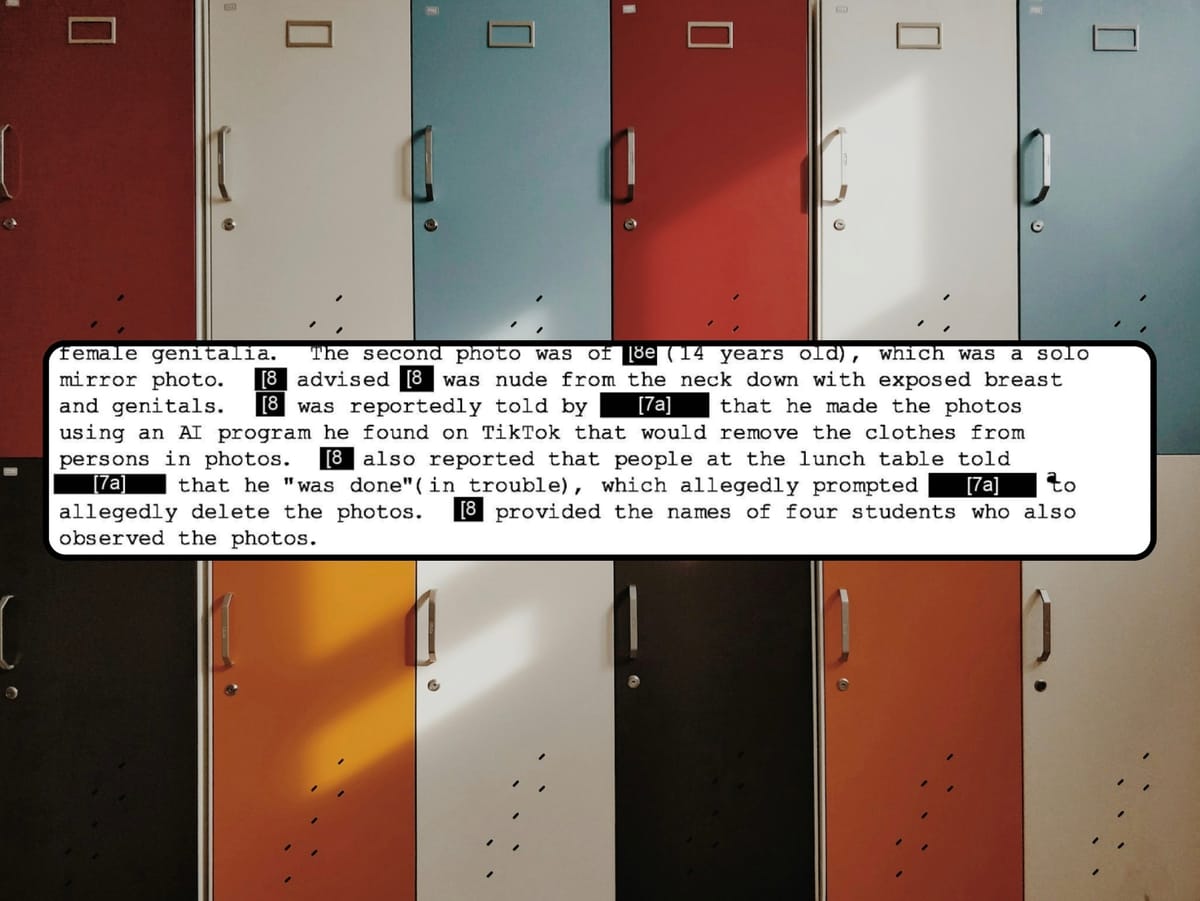

An in-depth police report obtained by 404 Media shows how a school, and then the police, investigated a wave of AI-powered “nudify” apps in a high school.

Wow I am so glad this shit wasn’t around when I was in high school. This sounds horrifying to have to deal with as a student.

Just when I get the feeling kids these days are a little more tolerant than the last generation, NOPE! “Hey Chris! Watch this 5-min long video of our teacher taking a troll cock!”

There was an article 2-3 months back about a small town in Spain being torn apart by this kind of thing and I braced as I knew it couldn’t be long. What a time to be a kid or parent.

Yep. My school years predate social media and mobile phones were pre smartphones. Shit cameras etc.

but the rumour mill was vicious and damaging. Very hard to prove the truth when the lie is so salacious. The more you denied it the more it seemed true.

These poor kids. How do they defend against this shit.

This is going to get pretty damn horrific real fast with Sora coming to general release soon. We need some restrictions and laws on the books.

- What laws do you want?
- How would they be enforced?
- What practical effects would that have?

1.) Germany has civil laws giving a person depicted in an image rights similar to those of its creator. It is also a criminal offense to publish images that are designed to damage a person’s public image. Those laws aren’t perfect, mainly because their wording is outdated, but the more general legal sentiment is there.

2.) The police trace the origin through detective work. Social circles in schools aren’t that huge, so p2p distribution is pretty traceable, & publishing sites usually have IP logs.

A criminal court decides the severity of the punishment for the perpetrator. A civil court decides the amount of monetary damages that were caused and must be compensated by the perpetrator or their legal guardian.

People simply forwarding such material can also be liable (since they are distributing copyrighted material), & therefore the distribution can be slowed or stopped.

3.) It gives the police a reason to investigate, gives victims a tool to stop distribution, & is a way to compensate victims for the damage caused.

1.) Germany has civil laws giving a person depicted in an image rights similar to those of its creator. It is also a criminal offense to publish images that are designed to damage a person’s public image. Those laws aren’t perfect, mainly because their wording is outdated, but the more general legal sentiment is there.

Germany also has laws criminalizing insults. You can actually be prosecuted for calling someone an asshole, say. Americans tend to be horrified when they learn that. I wonder if feelings in that regard may be changing.

AFAIK, it is unusual, internationally, that the English legal tradition does not have defamation (damaging someone’s reputation/public image) as a criminal offense, but only as a civil wrong. I think Germany may be unusual in the other direction. Not sure.

2.) The police trace the origin through detective work. Social circles in schools aren’t that huge, so p2p distribution is pretty traceable, & publishing sites usually have IP logs.

Ok, the police would interrogate the high-schoolers and demand to know who had the pictures, who made them, who shared them, etc… That would certainly be an important life lesson.

The police would also seize the records of internet services. I’d think some people would have concerns about the level of government surveillance here; perhaps that should be addressed.

How does that relate to encryption, for example? Some services may feel that they avoid a lot of bother and attract customers by not storing the relevant data. Should they be forced?

3.) It gives the police a reason to investigate, gives victims a tool to stop distribution, & is a way to compensate victims for the damage caused.

That’s what you want to happen. It does not consider what one would expect to actually happen. It’s fairly common for people of high school age to insult and defame each other. Do the German police commonly investigate this?

Germany also has laws criminalizing insults. You can actually be prosecuted for calling someone an asshole, say. Americans tend to be horrified when they learn that. I wonder if feelings in that regard may be changing.

I don’t care about the feelings of Americans reading this. Tbh

Germany is a western liberal democracy, same as the US.

On the other hand, I’m horrified that you seem to equate a quick insult with deepfake porn of minors.

The police would also seize the records of internet services. I’d think some people would have concerns about the level of government surveillance here; perhaps that should be addressed.

Arguably, government entities have more unrestricted access to this kind of data in the US than in the EU.

How does that relate to encryption, for example? Some services may feel that they avoid a lot of bother and attract customers by not storing the relevant data. Should they be forced?

There are many entities that store data about you. Maybe the specific service doesn’t cooperate. But what about the server host, maybe the ad network, maybe the app store, and certainly the payment processor?

If the police can lay out how that data can help solve the case, providers should & can be forced by judges to hand over that data to a certain extent, both in the US and the EU.

Do the German police commonly investigate this?

Insults? No, those are mostly a civil matter, not a criminal one.

(Deepfake) porn of minors? Yes, certainly.

Digitally watermark the image for identification purposes. Hash the creator’s hardware ID, MAC address, and IP address, and have that inserted via steganography or similar means. Just like printers embed a MIC (machine identification code) in the documents they print, AI-generated imagery should do the same at this point. It’s not perfect and probably has some undesirable consequences, but it’s better than nothing when trying to track down deepfake creators.

Honestly, I can’t tell if this is sarcasm or not.

Samsung’s AI does watermark its images in the EXIF metadata. Yes, it is trivial for us to remove the EXIF data, but it’s enough to catch these low-effort bad actors.

I think all the main AI services watermark their images (invisibly, not in the metadata). A nudify service might not, I imagine.

I was rather wondering about the support for extensive surveillance.

I can better explain what I don’t want out there, and we’ve already seen some pretty awful shit. This will usher in a completely new generation of CSAM, bullying, harassment, and political sabotage. It seems we should have some protections or penalties in place to cover such things.

You say what you (don’t) want and trust the experts (i.e., your representatives) to get us there. That’s reasonable. Suppose no new laws are made, perhaps because the experts think that new laws would do more harm than good. Would you continue to trust the experts?

I kinda doubt porn would be a problem with Sora, just like it’s not a problem with Dall-e. The model is locked down on OpenAI’s servers, and open-source models are nowhere near as good yet. Even if there were a comparable downloadable model, it’d be so computationally expensive that I doubt teens could freely run it.

open-source models are nowhere near as good yet

By the time a law would be adopted, it probably will be. I wouldn’t want to rely on the “kindness” of commercial entities as the sole protector of consumer welfare. We’ve seen how well that works with Google and Facebook.

Even if there were a comparable downloadable model, it’d be so computationally expensive that I doubt teens could freely run it.

For now, but with every new tech, hardware efficiency optimization is never far behind. Especially considering that the performance required for training != the performance required for running/outputting.

Considering the glacial speed at which our government moves, I’d bet on those hardware efficiency optimizations making it out before any significant law gets implemented.

Um… the Taylor Swift porn deepfakes were Dall-e.

Sure - they try to prevent that stuff, but it’s hardly perfect. And not all bullying is easily spotted. Imagine a deepfake of a kid sending a text message, but the bubbles are green. Or maybe they’re smiling at someone they hate.

Also, Stable Diffusion is more than good enough for this stuff. It’s free, and any decent gaming laptop can run it. It takes mine 20 seconds to produce a decent deepfake… I’ve used it to touch up my own photos.

Are current laws against harassment insufficient?

what are you, some kind of pedophile terrorist money-laundering drug dealer??

Correct. Most states’ laws do not envision the situation we are currently seeing, let alone what’s coming.

Check your state. What constitutes harassment, and can you think of harassing things that could be done without violating the law? I can for my state.

Considering the restrictions they put on ChatGPT…

I’m so relieved to be an average or maybe slightly below average looking adult male with an established career. It would be hell being a teenage girl in today’s world.